Description

Introduction

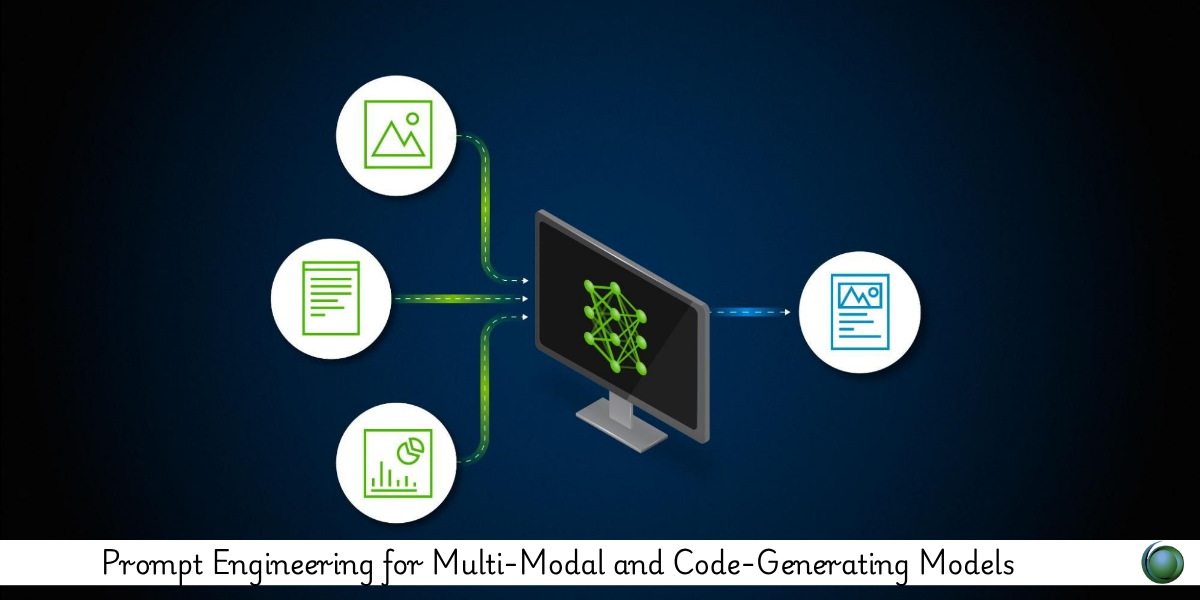

As AI evolves beyond text, prompt engineering now extends to models capable of interpreting images, generating code, or reasoning across modalities. This course introduces best practices for crafting prompts that guide multi-modal and code-generating models like GPT-4, Gemini, Claude, and Copilot. Learners will gain hands-on strategies to structure inputs that yield coherent, relevant, and accurate outputs across formats.

Prerequisites

Basic to intermediate experience with LLMs or prompt engineering

Familiarity with code (e.g., Python, JavaScript) and multi-modal content formats

Access to multi-modal and/or code-focused AI tools (e.g., GPT-4, GitHub Copilot, Claude)

Table of Contents

- Overview of Multi-Modal and Code-Generating Models

1.1 Capabilities of GPT-4, Gemini, Claude, and Copilot

1.2 Differences in Text, Image, and Code Processing

1.3 Model Selection Based on Use Case

- Foundations of Multi-Modal Prompting

2.1 Structuring Prompts for Mixed Input Types

2.2 Referencing Image Content and Descriptions

2.3 Techniques for Visual Question Answering (VQA)

- Prompting Code-Generating Models

3.1 Effective Prompts for Code Synthesis

3.2 Handling Boilerplate vs. Complex Logic

3.3 Instructing Models for Comments, Refactoring, and Tests

- Image-to-Text and Text-to-Image Prompting

4.1 Prompting for Visual Summaries or Captioning

4.2 Embedding Visual Hints in Prompts

4.3 Generating Text Descriptions for Design, UI, or Diagrams

- Prompting for Code Explanation and Debugging

5.1 Requesting Step-by-Step Code Breakdown

5.2 Language-Agnostic Prompting for Cross-Language Understanding

5.3 Debugging and Error Interpretation Prompts

- Working with Tables, Charts, and Structured Data

6.1 Prompt Formats for Reading and Interpreting Tabular Data

6.2 Multi-Modal Reasoning with Mixed Data Inputs

6.3 Visual Analytics Generation from Natural Language

- Prompt Chaining for Multi-Modal Tasks

7.1 Sequential Prompts Across Modalities (e.g., Image to Code to Text)

7.2 Output Reuse and Prompt Continuity

7.3 Hybrid Workflows: Combining LLMs and APIs

- Evaluation and Testing of Prompted Outputs

8.1 Accuracy and Context Sensitivity Metrics

8.2 Human-in-the-Loop Review Strategies

8.3 Common Failure Modes in Multi-Modal or Code Tasks

- Use Case Applications

9.1 Education: Code Tutors and Diagram Analysis

9.2 Software Development: Copilot and LLM Pair Programming

9.3 Content Creation: Mixed Media and Storyboarding

9.4 Research: Annotated Images and Code Simulation

- Ethical Considerations and Prompt Security

10.1 Handling Sensitive Inputs (Code, Images, PII)

10.2 Preventing Code Injection or Hallucination

10.3 Maintaining Accountability in Automated Workflows

Prompting for multi-modal and code-generating models unlocks advanced capabilities across AI applications, from automating development tasks to interpreting visual data. By learning to guide these models with clear, targeted, and structured prompts, professionals can apply them effectively wherever language meets code and imagery.
